I talk about NBA impact metrics a lot, and as most people familiar with my content know, I’ve even created a few. Now I’m ready to add another player to the mix: “Cryptbeam Plus/Minus” (CrPM). Named after this website, CrPM joins a long line of composite metrics that aim to identify the difference-makers of the sport through quantitative analysis. Why? As the creator of Player-Tracking Plus/Minus (PTPM), Andrew Johnson, once said: “Because what the world needs now is another all in one basketball player metric.” [1]

Overview

CrPM draws most of its inspiration from two established impact metrics: Jacob Goldstein’s Player Impact Plus/Minus (PIPM) and Ben Taylor’s Augmented Plus/Minus (AuPM). What these three metrics have in common is that they belong to the same “branch” of metric. I tend to sort impact metrics into one of three types:

- Box: a composite metric that is calculated using the box score only

- Hybrid: a composite metric that is calculated using counting statistics outside the box score (e.g. player tracking and on-off data) — but may also include the box score

- APMs: a composite metric that uses ridge-regressed lineup data as the basis of its calculation

As for where CrPM falls among these categories, it’s formally a hybrid metric. The metric can be broken into two major components: a box score term and a plus-minus term. The box score “version” of CrPM can function as an impact metric on its own, estimating impact from traditionally recorded counting stats: points, rebounds, assists, etc. With Regularized Adjusted Plus/Minus (RAPM) as the target, RAPM is regressed on the box score to estimate a player’s per-possession impact on his team’s point differential.
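To make the box score regression a little more concrete, here is a minimal sketch that fits RAPM as a linear function of league-relative box score stats. The three stats, the toy player-seasons, the made-up weights, and the use of plain least squares are all assumptions for illustration; the actual CrPM inputs and coefficients aren't listed in this post.

```python
def solve(a, b):
    """Solve the linear system a x = b with Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        # Partial pivoting: move the largest remaining entry onto the diagonal.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_linear(features, target):
    """Least-squares fit of target ~ intercept + features (normal equations)."""
    rows = [[1.0] + list(f) for f in features]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * t for r, t in zip(rows, target)) for i in range(k)]
    return solve(xtx, xty)


def predict(coefs, feature_row):
    """Apply fitted coefficients (intercept first) to one player-season."""
    return coefs[0] + sum(c * x for c, x in zip(coefs[1:], feature_row))


# Hypothetical player-seasons: (points, rebounds, assists) per 100 possessions,
# each relative to league average. None of these numbers are real.
box = [(5.0, 1.0, 2.0), (-3.0, 4.0, -1.0), (0.0, -2.0, 3.0),
       (8.0, 0.5, -2.0), (-1.0, -1.0, -1.0), (2.0, 3.0, 1.0)]

# Toy RAPM target built from made-up weights so the fit can be checked.
true_coefs = [0.1, 0.3, 0.2, 0.4]
rapm = [predict(true_coefs, row) for row in box]

coefs = fit_linear(box, rapm)
box_estimate = predict(coefs, (4.0, 2.0, 1.0))  # estimate for a new player
```

Because the toy target is exactly linear, the fit recovers the made-up weights; with real RAPM the fit would be noisy, which is where the plus-minus term comes in.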

Two plus-minus statistics are then added to the box-score estimate to create CrPM: on-court Plus/Minus, which is a team’s per-100 point differential (Net Rating) with a player on the floor, and on-off Plus/Minus, which subtracts the team’s Net Rating with a given player off the floor from its Net Rating with him on the floor. The goal of the plus-minus component is to fill in some of the gaps left by what the box score can’t capture. Later testing of the model reinforced this idea.
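The two plus-minus inputs follow directly from those definitions. A small sketch, with made-up point and possession totals and with the simplifying assumption that a team and its opponent use the same number of possessions:

```python
def net_rating(points_for, points_against, possessions):
    """Point differential per 100 possessions."""
    return 100.0 * (points_for - points_against) / possessions


def on_off(on_court_net, off_court_net):
    """On-off Plus/Minus: on-court Net Rating minus off-court Net Rating."""
    return on_court_net - off_court_net


# Hypothetical team totals, split by whether the player was on the floor.
on_net = net_rating(points_for=1100, points_against=1050, possessions=1000)
off_net = net_rating(points_for=520, points_against=530, possessions=500)
swing = on_off(on_net, off_net)
```

In this toy case the team is +5.0 per 100 with the player on the floor and -2.0 without him, for an on-off of +7.0.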

Regression Details

The regressions for both the box score and plus-minus variants of the metric were based on fourteen years of player-seasons from Jeremias Engelmann’s xRAPM model. (xRAPM uses a prior, meaning that in the RAPM calculation a player’s score is pushed toward his previous season’s value rather than toward zero.) This provided a more stable base for the regression to capture a wider variety of player efficacy in shorter RAPM stints. Additionally, the penalization term for each season was homogenized to improve season-to-season interpretability.
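To show what "pushed toward the previous season rather than zero" means, here is a one-coefficient sketch of a ridge estimate with a prior. It is a textbook simplification and not Engelmann's actual xRAPM code; the function name, the penalty value, and the toy stint data are assumptions.

```python
def ridge_with_prior(x, y, prior, lam):
    """One-coefficient ridge estimate shrunk toward `prior`.

    Minimizes sum((y_i - x_i * b) ** 2) + lam * (b - prior) ** 2 over b.
    Setting prior = 0 gives ordinary ridge shrinkage toward zero.
    """
    sxx = sum(xi * xi for xi in x)
    sxy = sum(xi * yi for xi, yi in zip(x, y))
    return (sxy + lam * prior) / (sxx + lam)


# Four made-up stints in which the player's lineups were +4.0 each time.
stints_x = [1.0] * 4
stints_y = [4.0] * 4

toward_zero = ridge_with_prior(stints_x, stints_y, prior=0.0, lam=4.0)
toward_last_year = ridge_with_prior(stints_x, stints_y, prior=2.0, lam=4.0)
```

With so little data, the zero prior drags the estimate down to +2.0, while a +2.0 prior from last season lands at +3.0. As the sample grows, both estimates converge on what the data says.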

The box score is not manipulated in the CrPM calculation outside of setting the stats relative to league averages. The raw plus-minus terms, however, use minutes played as a stabilizer to draw results closer to zero as playing time decreases. A larger sample isn’t automatically more accurate, but this adjustment reduces variance in smaller samples, which lowers the odds of large errors in those spots. These two branches of NBA statistics combine to create CrPM. [2]
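The exact stabilizer isn't spelled out here, so the following is only a sketch of one common way to do it: scale the raw value by minutes / (minutes + k). The functional form, the constant `k`, and both function names are assumptions, not the actual CrPM adjustment.

```python
def relative_to_league(stat_per_100, league_avg_per_100):
    """Express a box score stat as a difference from league average."""
    return stat_per_100 - league_avg_per_100


def stabilize(raw_plus_minus, minutes, k=500.0):
    """Shrink a raw plus-minus value toward zero when minutes are low.

    k is a hypothetical constant: at minutes == k the raw value is halved,
    and as minutes grow the value approaches the raw number.
    """
    return raw_plus_minus * minutes / (minutes + k)


low_minutes = stabilize(10.0, minutes=500.0)    # halved
high_minutes = stabilize(10.0, minutes=2000.0)  # mostly preserved
```

The same raw +10.0 counts as +5.0 over 500 minutes but +8.0 over 2,000 minutes, which is the "draw results closer to zero as playing time decreases" behavior in miniature.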

The regressions, especially the box score ones, were all able to explain the variability in the target RAPM with a surprising degree of accuracy. The R^2 values for combined in- and out-of-sample RAPM ranged from 0.725 to 0.750 for the box-score metrics and rose to 0.825 with plus-minus included. A large concern for most ordinary linear regressions is heteroskedasticity, which is when the spread of the errors isn’t constant, for example when errors grow larger as the predicted variable increases. The breadth of performance captured in the RAPM target mitigated this, and CrPM serves as an accurate indicator of impact for average players and MVP players alike.
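For readers who want to run the same kind of checks on their own model, here is a minimal sketch of R^2 plus a rough heteroskedasticity diagnostic: the correlation between predicted values and the size of the residuals. It is an informal check under my own assumptions, not a formal test and not necessarily how CrPM was validated; the numbers are made up.

```python
def r_squared(actual, predicted):
    """Share of the variance in `actual` explained by `predicted`."""
    mean = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean) ** 2 for a in actual)
    return 1.0 - ss_res / ss_tot


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 if either input has no variance."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / (vx * vy) ** 0.5


def residual_spread_trend(actual, predicted):
    """Correlation between predictions and absolute residuals.

    Values near zero suggest errors of similar size across the range;
    a clearly positive value hints that errors grow with the prediction.
    """
    abs_resid = [abs(a - p) for a, p in zip(actual, predicted)]
    return pearson(predicted, abs_resid)


# Made-up target and prediction values, purely for illustration.
fit_quality = r_squared([1.0, 2.0, 3.0, 4.0], [1.5, 2.0, 3.0, 3.5])
trend = residual_spread_trend([1.1, 1.8, 3.3, 3.6], [1.0, 2.0, 3.0, 4.0])
```

In the second toy example the misses grow steadily with the prediction, so the trend comes out close to 1, which is the pattern you'd hope not to see in a real fit.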

- Target response of RAPM was collected from Jeremias Engelmann’s website.

- Box scores and plus-minus data were collected from Basketball-Reference.

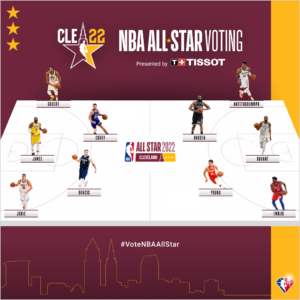

Current MVP Ladder

Because CrPM serves as an indicator of a player’s impact, it can do a solid job of identifying viable candidates for the league’s MVP award. Through the 18th of December, here are the metric’s top 10 players of the 2022 season among those with at least 540 minutes played:

1. Nikola Jokic (+11.8)

The reigning MVP has started his follow-up campaign with a bang, and looks to be the frontrunner to snag the award again. His placement in the box-score component of the metric (+12.4 per 100) would be first among all players since 1997 with at least 1,500 minutes played during the season. Jokic’s overall score of +11.8 in CrPM is tied with LeBron James’s legendary 2009 season for the best regular season on record.

2. Giannis Antetokounmpo (+9.4)

Voter fatigue in recent seasons has downplayed the regular-season greatness of the Greek Freak, but he seems as good as ever according to CrPM. Each of his last three seasons is a top-45 season since 1997 per the metric, with his dominating MVP run in 2020 ranking fourth (+10.8) among all high-minute players on record.

3. Joel Embiid (+6.6)

The metric suggests Embiid provides massive two-way value, with his marks on offense and defense being nearly identical in both the box-score and plus-minus versions of the metric. Despite a shooting slump to start the season that followed an exceptional mid-range campaign for the big man, he still adds a ton of value in Philadelphia. So far, the 76ers outscore opponents by +4.3 per 100 when Embiid is on the floor and are +9.2 points better with him in the lineup.

4. Rudy Gobert (+6.5)

It’s not an NBA regular season nowadays without the analytics looking upon Rudy Gobert with perhaps a little too much enthusiasm. Regardless, he’s still a surefire candidate for the DPOY Award and a probable finalist, if not winner. Almost all of his impact comes on the defensive end: he adds only +0.4 points per 100 in the offensive component of CrPM, with the remaining +6.1 points coming from his all-time-level rim protection and paint anchoring.

5. Stephen Curry (+5.7)

Curry’s plus-minus portfolio isn’t as transcendent as it was during his all-time seasons in the mid-2010s, but a revamped Golden State roster that amplifies his strengths boosts those numbers into the upper echelon of NBA superstars once more. The Warriors outscore opponents by +14.1 points per 100 with Curry on the floor and are +12 points better with him on the court than off it. While the box score can’t capture all of the value he brings to the table, Curry still looks like one of the best players in the league according to CrPM.

6. Kevin Durant (+5.5)

Curry’s former teammate is another shooting savant who continues to string together legendary offensive seasons with league-best marks in both scoring volume and efficiency. He’s arguably the greatest mid-range shooter in NBA history and makes Brooklyn’s offense great with these shots alone. Speaking of, the Nets look like a surefire title contender with Durant on the floor, when they outscore opponents by +7.3 points per 100 possessions.

7. Clint Capela (+4.6)

While I don’t think Clint Capela has been a top-10 player in the league this year, his placement illustrates arguably the biggest sign of caution I’d address when using CrPM. Because the box-score component carries much of the weight in the plus-minus-included formula, the metric is overly sensitive to defensive rebounds and stocks. That matters especially in the modern league, when spacing affects statistics like rebounds to a higher degree than ever. Capela is a valuable player nonetheless, but his ranking is a case of a stylistic disadvantage of CrPM.

8. Jarrett Allen (+4.5)

I’m similarly not as fond of Allen’s actual ranking among the league’s best, but he’s been sneakily good this season. The Cavaliers have clearly stocked up on Michael’s Secret Stuff for 2022, because they’ve been better than the Nets with Durant when they put Jarrett Allen in the game. Cleveland outscores its opponents by +8.4 points per 100 with Allen in the lineup! One of the sport’s emerging two-way talents receives well-deserved credit in the analytics.

9. Karl-Anthony Towns (+4.4)

Towns has played like an all-league star for a while, and now that his longtime home of Minnesota has found its footing in 2022, his value is more evident than ever. CrPM views Towns as a clear value-add on offense and defense, and this is enough to make him look like a candidate for the All-NBA second team this year. He’s one of the On-Off kings so far, posting a +13.1 Net Plus/Minus in a similar vein to other top-player candidates.

10. Jimmy Butler (+4.4)

If this list were box-score only, Butler would be several spots higher with his +6.3 score in Box CrPM; however, plus-minus doesn’t look upon him like the other players on this list entering 2022. Miami plays like a surefire postseason team with him on the court, but they actually perform +2.4 points better with him off the floor! While this is very, very likely just noise that accompanies most plus-minus data like this, it doesn’t serve as a very good indicator of his impact, thus compressing his score in plus-minus-included CrPM.

Full 2022 Leaderboard

| Player | Tm | G | MP | O CrPM | D CrPM | CrPM |

| Nikola Jokić | DEN | 24 | 783 | 7.9 | 3.9 | 11.8 |

| Giannis Antetokounmpo | MIL | 26 | 849 | 5.5 | 3.9 | 9.4 |

| Joel Embiid | PHI | 19 | 629 | 3.3 | 3.3 | 6.6 |

| Rudy Gobert | UTA | 29 | 920 | 0.4 | 6.1 | 6.5 |

| Stephen Curry | GSW | 28 | 962 | 5.2 | 0.5 | 5.7 |

| Kevin Durant | BRK | 27 | 1000 | 5.3 | 0.2 | 5.5 |

| Clint Capela | ATL | 29 | 864 | 0.2 | 4.4 | 4.6 |

| Jarrett Allen | CLE | 28 | 913 | 1.9 | 2.6 | 4.5 |

| Karl-Anthony Towns | MIN | 28 | 963 | 3.0 | 1.4 | 4.4 |

| Jimmy Butler | MIA | 18 | 607 | 4.2 | 0.2 | 4.4 |

| LaMelo Ball | CHO | 25 | 828 | 3.2 | 1.2 | 4.4 |

| DeMar DeRozan | CHI | 24 | 846 | 4.9 | -0.6 | 4.3 |

| Trae Young | ATL | 29 | 990 | 6.2 | -2.0 | 4.2 |

| Montrezl Harrell | WAS | 31 | 799 | 3.3 | 0.9 | 4.2 |

| Myles Turner | IND | 30 | 887 | -0.6 | 4.6 | 4.1 |

| John Collins | ATL | 29 | 939 | 2.4 | 1.5 | 3.9 |

| LeBron James | LAL | 18 | 667 | 3.1 | 0.7 | 3.8 |

| Chris Paul | PHO | 28 | 906 | 3.6 | 0.3 | 3.8 |

| Jusuf Nurkić | POR | 30 | 752 | 0.6 | 3.2 | 3.8 |

| Dejounte Murray | SAS | 28 | 963 | 2.2 | 1.6 | 3.8 |

| Donovan Mitchell | UTA | 28 | 910 | 4.1 | -0.6 | 3.5 |

| Jonas Valančiūnas | NOP | 31 | 980 | 1.5 | 2.0 | 3.5 |

| Domantas Sabonis | IND | 31 | 1057 | 1.9 | 1.5 | 3.4 |

| Jrue Holiday | MIL | 25 | 819 | 3.0 | 0.4 | 3.3 |

| Kristaps Porziņģis | DAL | 21 | 634 | 1.4 | 1.9 | 3.3 |

| Jaren Jackson Jr. | MEM | 29 | 787 | 0.2 | 3.1 | 3.3 |

| Jayson Tatum | BOS | 30 | 1095 | 2.3 | 0.9 | 3.2 |

| Andre Drummond | PHI | 29 | 571 | -2.8 | 6.0 | 3.2 |

| Fred VanVleet | TOR | 28 | 1060 | 2.7 | 0.4 | 3.1 |

| Anthony Davis | LAL | 27 | 955 | 1.0 | 2.2 | 3.1 |

| LaMarcus Aldridge | BRK | 25 | 590 | 1.8 | 1.3 | 3.1 |

| D’Angelo Russell | MIN | 24 | 781 | 2.9 | 0.1 | 3.0 |

| Miles Bridges | CHO | 31 | 1135 | 1.9 | 1.0 | 2.9 |

| James Harden | BRK | 26 | 942 | 1.9 | 0.9 | 2.8 |

| Richaun Holmes | SAC | 22 | 596 | 1.5 | 1.3 | 2.8 |

| Al Horford | BOS | 24 | 711 | 0.2 | 2.4 | 2.6 |

| Luka Dončić | DAL | 21 | 735 | 2.3 | 0.2 | 2.5 |

| Bobby Portis | MIL | 25 | 711 | 0.5 | 2.0 | 2.5 |

| Alperen Şengün | HOU | 29 | 538 | -0.1 | 2.6 | 2.5 |

| Jarred Vanderbilt | MIN | 28 | 682 | -1.6 | 4.0 | 2.4 |

| Daniel Gafford | WAS | 28 | 601 | -1.1 | 3.4 | 2.3 |

| Damian Lillard | POR | 24 | 873 | 3.9 | -1.6 | 2.3 |

| Patrick Beverley | MIN | 21 | 552 | 1.3 | 0.8 | 2.1 |

| Ja Morant | MEM | 19 | 619 | 3.0 | -0.9 | 2.1 |

| Wendell Carter Jr. | ORL | 30 | 865 | 0.2 | 1.8 | 2.0 |

| Evan Mobley | CLE | 25 | 840 | -0.9 | 2.9 | 2.0 |

| Mike Conley | UTA | 26 | 727 | 3.2 | -1.2 | 1.9 |

| Deandre Ayton | PHO | 20 | 625 | 0.5 | 1.5 | 1.9 |

| Malcolm Brogdon | IND | 25 | 883 | 2.7 | -0.8 | 1.9 |

| Devin Booker | PHO | 21 | 676 | 3.1 | -1.2 | 1.9 |

| Robert Williams | BOS | 23 | 638 | -0.4 | 2.3 | 1.9 |

| Derrick Rose | NYK | 26 | 636 | 2.2 | -0.4 | 1.8 |

| Paul George | LAC | 24 | 861 | 0.6 | 1.2 | 1.8 |

| Darius Garland | CLE | 29 | 991 | 3.0 | -1.3 | 1.8 |

| Draymond Green | GSW | 28 | 849 | -0.9 | 2.6 | 1.7 |

| Jakob Poeltl | SAS | 21 | 606 | 0.5 | 1.1 | 1.6 |

| Nikola Vučević | CHI | 20 | 663 | -0.9 | 2.5 | 1.6 |

| De’Anthony Melton | MEM | 27 | 654 | -0.6 | 2.2 | 1.6 |

| Bam Adebayo | MIA | 18 | 592 | 0.4 | 1.1 | 1.5 |

| Ricky Rubio | CLE | 31 | 880 | 1.2 | 0.3 | 1.5 |

| Brandon Ingram | NOP | 24 | 855 | 2.3 | -0.8 | 1.5 |

| Cole Anthony | ORL | 23 | 785 | 1.9 | -0.5 | 1.4 |

| Alex Caruso | CHI | 24 | 685 | -0.1 | 1.5 | 1.4 |

| Mo Bamba | ORL | 27 | 774 | -2.4 | 3.8 | 1.4 |

| Monte Morris | DEN | 29 | 875 | 2.4 | -1.1 | 1.3 |

| Zach LaVine | CHI | 27 | 948 | 3.1 | -1.8 | 1.3 |

| Shai Gilgeous-Alexander | OKC | 25 | 873 | 1.9 | -0.6 | 1.2 |

| Jalen Brunson | DAL | 27 | 795 | 2.7 | -1.5 | 1.2 |

| Pascal Siakam | TOR | 17 | 586 | 1.1 | 0.1 | 1.2 |

| Mitchell Robinson | NYK | 27 | 653 | -1.5 | 2.6 | 1.1 |

| Aaron Gordon | DEN | 29 | 945 | 1.2 | -0.2 | 1.1 |

| Devin Vassell | SAS | 23 | 559 | 0.1 | 1.0 | 1.1 |

| Tyus Jones | MEM | 30 | 634 | 1.8 | -0.7 | 1.1 |

| Cedi Osman | CLE | 25 | 552 | 1.3 | -0.2 | 1.1 |

| Kevon Looney | GSW | 30 | 564 | -1.0 | 2.0 | 1.0 |

| Deni Avdija | WAS | 31 | 672 | -1.3 | 2.3 | 1.0 |

| Christian Wood | HOU | 28 | 888 | -1.0 | 2.0 | 1.0 |

| Desmond Bane | MEM | 30 | 863 | 1.3 | -0.3 | 1.0 |

| Anthony Edwards | MIN | 28 | 1006 | 0.5 | 0.5 | 1.0 |

| Khris Middleton | MIL | 21 | 650 | 1.0 | 0.0 | 1.0 |

| Immanuel Quickley | NYK | 29 | 633 | 1.8 | -0.9 | 0.9 |

| Andrew Wiggins | GSW | 29 | 901 | 1.7 | -0.8 | 0.9 |

| Gary Trent Jr. | TOR | 27 | 933 | 0.6 | 0.2 | 0.8 |

| Ivica Zubac | LAC | 30 | 742 | -1.2 | 1.9 | 0.7 |

| Kyle Anderson | MEM | 26 | 565 | -1.3 | 1.9 | 0.6 |

| Alec Burks | NYK | 29 | 774 | 0.4 | 0.3 | 0.6 |

| Caris LeVert | IND | 23 | 668 | 1.3 | -0.7 | 0.6 |

| Cody Martin | CHO | 29 | 799 | 0.1 | 0.4 | 0.6 |

| Tyrese Haliburton | SAC | 28 | 930 | 0.1 | 0.4 | 0.5 |

| Larry Nance Jr. | POR | 30 | 650 | -0.9 | 1.4 | 0.5 |

| Scottie Barnes | TOR | 27 | 973 | 0.1 | 0.4 | 0.5 |

| Tyrese Maxey | PHI | 28 | 969 | 1.9 | -1.5 | 0.5 |

| Josh Hart | NOP | 24 | 756 | 0.1 | 0.3 | 0.4 |

| Gordon Hayward | CHO | 31 | 1052 | 1.4 | -1.0 | 0.4 |

| CJ McCollum | POR | 24 | 848 | 1.4 | -1.1 | 0.3 |

| Mikal Bridges | PHO | 28 | 963 | 0.3 | 0.0 | 0.3 |

| Tobias Harris | PHI | 21 | 720 | 0.7 | -0.4 | 0.3 |

| Lonzo Ball | CHI | 27 | 958 | -0.9 | 1.2 | 0.3 |

| Jordan Clarkson | UTA | 29 | 730 | 1.3 | -1.0 | 0.2 |

| Steven Adams | MEM | 30 | 754 | -1.3 | 1.5 | 0.2 |

| OG Anunoby | TOR | 16 | 588 | 0.5 | -0.3 | 0.2 |

| Marcus Smart | BOS | 29 | 991 | -0.3 | 0.4 | 0.2 |

| Kelly Oubre Jr. | CHO | 31 | 903 | 1.1 | -1.0 | 0.1 |

| Lauri Markkanen | CLE | 22 | 664 | 0.3 | -0.2 | 0.1 |

| Kyle Lowry | MIA | 28 | 962 | 0.9 | -0.9 | 0.1 |

| Devonte’ Graham | NOP | 28 | 876 | 1.3 | -1.2 | 0.1 |

| Will Barton | DEN | 25 | 825 | 0.6 | -0.6 | 0.0 |

| Grayson Allen | MIL | 30 | 875 | 0.7 | -0.7 | 0.0 |

| Derrick White | SAS | 28 | 875 | 0.3 | -0.4 | -0.1 |

| T.J. McConnell | IND | 24 | 581 | 0.4 | -0.5 | -0.1 |

| Franz Wagner | ORL | 31 | 994 | 0.3 | -0.4 | -0.1 |

| Jerami Grant | DET | 24 | 797 | -0.1 | 0.0 | -0.2 |

| Danny Green | PHI | 23 | 558 | -2.0 | 1.8 | -0.2 |

| Russell Westbrook | LAL | 30 | 1078 | 0.2 | -0.4 | -0.2 |

| Mason Plumlee | CHO | 22 | 562 | -1.6 | 1.5 | -0.2 |

| Patty Mills | BRK | 30 | 905 | 1.6 | -1.8 | -0.2 |

| Luke Kennard | LAC | 30 | 870 | 0.8 | -1.1 | -0.2 |

| Herb Jones | NOP | 28 | 766 | -1.7 | 1.4 | -0.3 |

| Bradley Beal | WAS | 28 | 1005 | 1.6 | -1.9 | -0.3 |

| Jae Crowder | PHO | 28 | 789 | -1.5 | 1.2 | -0.3 |

| Nassir Little | POR | 26 | 603 | -1.6 | 1.3 | -0.3 |

| Keldon Johnson | SAS | 27 | 829 | 0.1 | -0.5 | -0.4 |

| George Hill | MIL | 26 | 686 | 0.1 | -0.5 | -0.4 |

| Royce O’Neale | UTA | 27 | 833 | -1.4 | 0.9 | -0.5 |

| Bojan Bogdanović | UTA | 29 | 864 | 1.8 | -2.2 | -0.5 |

| Jordan Poole | GSW | 28 | 860 | 0.8 | -1.2 | -0.5 |

| Reggie Jackson | LAC | 30 | 992 | 1.1 | -1.6 | -0.5 |

| Gabe Vincent | MIA | 27 | 532 | 0.3 | -0.8 | -0.5 |

| Jae’Sean Tate | HOU | 30 | 844 | -0.8 | 0.2 | -0.6 |

| Cade Cunningham | DET | 23 | 745 | -1.7 | 1.1 | -0.6 |

| Lonnie Walker | SAS | 27 | 611 | -0.1 | -0.5 | -0.6 |

| Norman Powell | POR | 26 | 825 | 1.0 | -1.6 | -0.6 |

| Terry Rozier | CHO | 22 | 708 | 0.5 | -1.1 | -0.7 |

| Bogdan Bogdanović | ATL | 20 | 564 | 0.5 | -1.2 | -0.7 |

| Josh Richardson | BOS | 22 | 555 | 0.1 | -0.8 | -0.7 |

| Shake Milton | PHI | 25 | 636 | 0.0 | -0.8 | -0.8 |

| Carmelo Anthony | LAL | 30 | 828 | -0.3 | -0.5 | -0.8 |

| Georges Niang | PHI | 28 | 662 | 0.2 | -1.0 | -0.8 |

| Anfernee Simons | POR | 26 | 618 | 1.3 | -2.2 | -0.8 |

| Harrison Barnes | SAC | 25 | 836 | 0.2 | -1.1 | -0.9 |

| De’Aaron Fox | SAC | 29 | 993 | 0.5 | -1.4 | -0.9 |

| Matisse Thybulle | PHI | 23 | 555 | -3.6 | 2.7 | -0.9 |

| Danilo Gallinari | ATL | 26 | 568 | -0.1 | -0.8 | -0.9 |

| Isaiah Stewart | DET | 26 | 668 | -2.7 | 1.8 | -0.9 |

| Cameron Johnson | PHO | 28 | 682 | -0.6 | -0.3 | -0.9 |

| Raul Neto | WAS | 30 | 607 | -0.2 | -0.8 | -0.9 |

| Seth Curry | PHI | 27 | 929 | 1.1 | -2.0 | -1.0 |

| Pat Connaughton | MIL | 32 | 929 | -0.4 | -0.6 | -1.0 |

| Dennis Schröder | BOS | 27 | 894 | 0.8 | -1.9 | -1.0 |

| Kevin Huerter | ATL | 28 | 774 | 0.2 | -1.3 | -1.0 |

| Malik Monk | LAL | 28 | 666 | -0.2 | -0.8 | -1.0 |

| Tyler Herro | MIA | 25 | 822 | 0.5 | -1.5 | -1.1 |

| Buddy Hield | SAC | 30 | 857 | 0.0 | -1.1 | -1.2 |

| Julius Randle | NYK | 30 | 1063 | -1.3 | 0.0 | -1.2 |

| Joe Ingles | UTA | 29 | 718 | 0.2 | -1.5 | -1.3 |

| Spencer Dinwiddie | WAS | 26 | 762 | 0.2 | -1.4 | -1.3 |

| Grant Williams | BOS | 28 | 618 | -1.0 | -0.3 | -1.3 |

| Tim Hardaway Jr. | DAL | 28 | 878 | -0.1 | -1.2 | -1.3 |

| Precious Achiuwa | TOR | 22 | 579 | -2.8 | 1.3 | -1.4 |

| Terance Mann | LAC | 29 | 829 | -0.5 | -1.1 | -1.6 |

| Josh Giddey | OKC | 26 | 779 | -2.2 | 0.6 | -1.6 |

| Chris Duarte | IND | 29 | 843 | -1.0 | -0.6 | -1.6 |

| P.J. Tucker | MIA | 30 | 856 | -1.2 | -0.5 | -1.6 |

| Bruce Brown | BRK | 24 | 539 | -2.5 | 0.9 | -1.7 |

| Kyle Kuzma | WAS | 29 | 937 | -2.3 | 0.6 | -1.7 |

| Dorian Finney-Smith | DAL | 28 | 899 | -1.8 | 0.0 | -1.8 |

| Davion Mitchell | SAC | 29 | 744 | -0.3 | -1.6 | -1.9 |

| Robert Covington | POR | 30 | 822 | -4.2 | 2.2 | -2.0 |

| Luguentz Dort | OKC | 26 | 841 | -0.3 | -1.7 | -2.0 |

| Eric Gordon | HOU | 25 | 745 | 0.0 | -2.0 | -2.0 |

| Furkan Korkmaz | PHI | 27 | 600 | -1.0 | -1.1 | -2.1 |

| Nickeil Alexander-Walker | NOP | 31 | 877 | -1.3 | -0.8 | -2.1 |

| Kevin Porter Jr. | HOU | 19 | 574 | -2.2 | 0.1 | -2.2 |

| Dwight Powell | DAL | 28 | 536 | -1.8 | -0.4 | -2.2 |

| Duncan Robinson | MIA | 30 | 849 | -1.3 | -0.9 | -2.2 |

| Isaac Okoro | CLE | 23 | 663 | -1.3 | -1.0 | -2.3 |

| Jeff Green | DEN | 29 | 732 | -0.9 | -1.4 | -2.3 |

| Eric Bledsoe | LAC | 30 | 787 | -2.4 | -0.1 | -2.5 |

| Chuma Okeke | ORL | 25 | 562 | -3.5 | 1.1 | -2.5 |

| Cam Reddish | ATL | 25 | 558 | -1.5 | -1.0 | -2.5 |

| Landry Shamet | PHO | 27 | 562 | -0.6 | -2.0 | -2.5 |

| RJ Barrett | NYK | 25 | 784 | -1.4 | -1.2 | -2.5 |

| Kentavious Caldwell-Pope | WAS | 31 | 906 | -2.0 | -0.5 | -2.6 |

| Justin Holiday | IND | 25 | 693 | -1.2 | -1.5 | -2.7 |

| Darius Bazley | OKC | 28 | 765 | -4.5 | 1.9 | -2.7 |

| Frank Jackson | DET | 28 | 620 | -0.6 | -2.1 | -2.7 |

| Killian Hayes | DET | 23 | 602 | -2.9 | 0.1 | -2.9 |

| Facundo Campazzo | DEN | 28 | 567 | -1.9 | -1.0 | -2.9 |

| Jeremiah Robinson-Earl | OKC | 28 | 608 | -2.9 | 0.0 | -3.0 |

| Jalen Suggs | ORL | 21 | 583 | -3.1 | -0.1 | -3.2 |

| Saddiq Bey | DET | 28 | 897 | -2.2 | -1.1 | -3.3 |

| Evan Fournier | NYK | 30 | 857 | -1.6 | -1.8 | -3.4 |

| Jaden McDaniels | MIN | 26 | 646 | -3.9 | 0.5 | -3.4 |

| Malik Beasley | MIN | 29 | 750 | -1.7 | -1.8 | -3.4 |

| R.J. Hampton | ORL | 29 | 536 | -2.4 | -1.1 | -3.5 |

| Garrett Temple | NOP | 29 | 532 | -4.2 | 0.5 | -3.6 |

| Avery Bradley | LAL | 26 | 599 | -3.1 | -0.9 | -4.0 |

| Gary Harris | ORL | 24 | 651 | -2.1 | -2.2 | -4.3 |

| Terrence Ross | ORL | 28 | 715 | -2.4 | -2.3 | -4.8 |

| Reggie Bullock | DAL | 27 | 646 | -3.1 | -1.7 | -4.8 |

| Jalen Green | HOU | 18 | 555 | -3.9 | -2.6 | -6.5 |

Updated Dec. 18, 2021
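If you want to work with the leaderboard yourself, here is a small sketch that parses rows in the table's pipe-delimited layout, applies a minutes cutoff, and ranks by CrPM. The rows are copied from the table above; the function names and the 0.15 rounding tolerance are my own assumptions.

```python
ROWS = """\
| Nikola Jokić | DEN | 24 | 783 | 7.9 | 3.9 | 11.8 |
| Giannis Antetokounmpo | MIL | 26 | 849 | 5.5 | 3.9 | 9.4 |
| Joel Embiid | PHI | 19 | 629 | 3.3 | 3.3 | 6.6 |
| Myles Turner | IND | 30 | 887 | -0.6 | 4.6 | 4.1 |
| Alperen Şengün | HOU | 29 | 538 | -0.1 | 2.6 | 2.5 |
| Gabe Vincent | MIA | 27 | 532 | 0.3 | -0.8 | -0.5 |
"""


def parse_row(line):
    """Turn one pipe-delimited leaderboard row into a dict."""
    cells = [c.strip() for c in line.strip().strip("|").split("|")]
    name, team, games, minutes, o_crpm, d_crpm, crpm = cells
    return {"player": name, "team": team, "games": int(games),
            "minutes": int(minutes), "o_crpm": float(o_crpm),
            "d_crpm": float(d_crpm), "crpm": float(crpm)}


def top_players(players, min_minutes, n):
    """Highest-CrPM players among those meeting the minutes cutoff."""
    eligible = [p for p in players if p["minutes"] >= min_minutes]
    return sorted(eligible, key=lambda p: p["crpm"], reverse=True)[:n]


def split_gap(player):
    """How far the offensive and defensive splits are from the total."""
    return abs(player["o_crpm"] + player["d_crpm"] - player["crpm"])


players = [parse_row(line) for line in ROWS.splitlines()]
ladder = [p["player"] for p in top_players(players, min_minutes=540, n=3)]
# Splits and totals are each rounded to one decimal, so small gaps
# (such as Myles Turner's 0.1) are expected.
rounding_ok = all(split_gap(p) < 0.15 for p in players)
```

With the 540-minute cutoff from the MVP ladder, Şengün and Vincent drop out of the sample and the top three match the list above.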

[1] Read Johnson’s article, a primer on PTPM, here.

[2] Because Basketball-Reference doesn’t report plus-minus for offense and defense as liberally as it does combined plus-minus, the offensive / defensive splits for CrPM are slightly less accurate than its total version.